The Ideogram API “Too Many Requests” error (HTTP 429) occurs when your application sends more requests than it is allowed within a specific time window. This guide explains why it happens and how to fix it with proven techniques like rate limiting, exponential backoff, caching, and request optimization. By implementing these strategies, developers can prevent API throttling, improve performance, and keep their applications stable over the long term.

🔑 Key Takeaways

- Error 429 occurs due to API rate limiting mechanisms

- Reducing request frequency helps prevent throttling

- Exponential backoff improves retry success rates

- Caching minimizes unnecessary API calls

- Monitoring usage ensures long-term API stability

Struggling with Ideogram API 429 Error? Here’s What’s Happening

If you’ve run into the Ideogram API “Too Many Requests” error, you already know the frustration: requests fail, responses lag, and your application behaves unpredictably.

You send what feels like a normal number of API calls… and suddenly, boom—HTTP 429: Too Many Requests.

Sound familiar?

This isn’t just a minor glitch. It can break user experiences, slow down your application, and even cost you users. Whether you’re building an AI-powered image generator or integrating Ideogram into your workflow, understanding this error is critical.

In this guide, you’ll learn:

- Why the Ideogram API error 429 happens

- How to fix it step-by-step

- Best practices to keep API throttling from coming back

Let’s break it down.

What is the Ideogram API Too Many Requests Error?

Understanding HTTP 429 Status Code

The 429 Too Many Requests error is a standard HTTP response code. It simply means:

“You’ve sent too many requests in a given time frame. Please slow down.”

APIs use this mechanism to protect their servers from overload.

Think of it like a busy restaurant. If too many customers arrive at once, the kitchen can’t handle it efficiently—so they ask some people to wait.

That’s exactly what the API is doing.

Why Ideogram API Shows This Error

Ideogram enforces rate limits for good reasons:

- Prevent server overload

- Ensure fair usage among users

- Maintain system stability

When your app exceeds the predefined request limits (for example, per minute or per hour), the API temporarily rejects further requests with a 429 until the limit window resets.

Common Causes of API Rate Limit Errors

Understanding the root cause is half the solution.

Here are the most common reasons behind the API rate limit exceeded error:

1. Sending Too Many Requests Quickly

Bursts of requests sent with no spacing between them are a very common trigger.

2. No Delay Between API Calls

Calling APIs in tight loops without pauses triggers throttling.

3. Poor API Integration

Unoptimized code can send redundant requests.

4. Multiple Users at Once

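High concurrency increases request volume dramatically. If all of your users’ requests go through a single API key, that combined traffic typically counts against one shared limit.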

5. Lack of Caching

Fetching the same data repeatedly instead of storing results.

👉 These issues fall under API throttling and request limit violations, which lead directly to error 429.

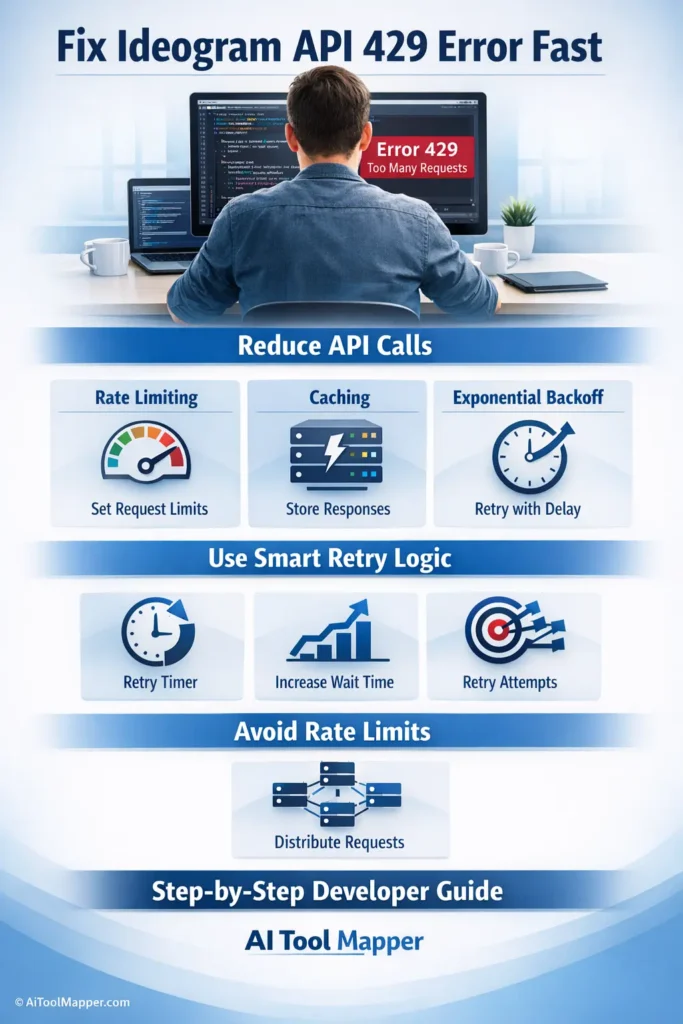

🛠️ How to Fix Ideogram API Too Many Requests Error

Let’s get practical. Here’s how to fix it.

1. Implement Rate Limiting

Control how frequently your app sends requests.

Instead of:

- 100 requests instantly

Use:

- 10 requests per second

Example Approach:

- Use a request queue

- Limit calls using timers

👉 Client-side rate limiting is the foundation of any fix for throttling errors.
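Here is a minimal Python sketch of the timer-based approach, assuming a limit of 10 requests per second (the example figure above, not a documented Ideogram limit). The `RateLimiter` class is hypothetical; it reserves evenly spaced start times under a lock, so several threads sharing it still can’t burst past the rate.

```python
import threading
import time


class RateLimiter:
    """Spaces request start times so at most `rate` requests begin per second."""

    def __init__(self, rate: float) -> None:
        self.interval = 1.0 / rate           # minimum gap between request starts
        self._next_slot = time.monotonic()   # earliest time the next request may start
        self._lock = threading.Lock()

    def acquire(self) -> None:
        """Block the calling thread until its reserved slot arrives."""
        with self._lock:
            now = time.monotonic()
            slot = max(now, self._next_slot)  # reserve the next free slot
            self._next_slot = slot + self.interval
        delay = slot - now
        if delay > 0:
            time.sleep(delay)                 # sleep outside the lock


# Assumed figure from the example above, not a documented Ideogram limit.
limiter = RateLimiter(rate=10)
# Before each API request:
#     limiter.acquire()
#     response = send_request(...)  # hypothetical request function
```

Even spacing is the simplest policy: it never lets unused capacity pile up into a later burst.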

2. Use Exponential Backoff Strategy

Instead of retrying immediately, wait longer after each failure.

Example:

- First retry → wait 1 second

- Second retry → wait 2 seconds

- Third retry → wait 4 seconds

This reduces pressure on the server and increases success rates.

👉 Exponential backoff is a widely used retry pattern; Google Cloud’s documentation, for example, recommends it for retrying failed requests.
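One way to compute those delays in Python is sketched below. The function name and its defaults (a 1-second base and a 60-second cap) are illustrative assumptions. It also adds optional "full jitter," a common refinement that randomizes each wait so that many clients throttled at the same moment don’t all retry in lockstep.

```python
import random


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 60.0,
                  jitter: bool = True) -> float:
    """Seconds to wait before retry number `attempt` (0 = first retry).

    Without jitter this is base * 2**attempt (1 s, 2 s, 4 s, ...), capped
    at `cap`. With full jitter, a random delay between 0 and that value
    is returned instead.
    """
    delay = min(cap, base * (2 ** attempt))
    return random.uniform(0, delay) if jitter else delay


print([backoff_delay(n, jitter=False) for n in range(4)])  # [1.0, 2.0, 4.0, 8.0]
```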

3. Add Smart Retry Logic

Don’t blindly retry.

Instead:

- Retry only on 429 errors

- Respect the Retry-After header

This is a key part of API error handling best practices.
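The sketch below combines both rules: it retries only on a 429, waits for the Retry-After value when the server sends one (either as a number of seconds or as an HTTP date), and otherwise falls back to jittered exponential backoff. `Response` and the `send` callable are hypothetical stand-ins for whatever HTTP client you use. The `sleep` parameter exists so the logic can be tested without real waiting.

```python
import random
import time
from dataclasses import dataclass, field
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Callable, Dict, Optional


@dataclass
class Response:
    """Hypothetical minimal response shape; adapt it to your HTTP client."""
    status: int
    headers: Dict[str, str] = field(default_factory=dict)
    body: str = ""


def retry_after_seconds(value: Optional[str]) -> Optional[float]:
    """Parse Retry-After, which is either delta-seconds or an HTTP date."""
    if not value:
        return None
    value = value.strip()
    if value.isdigit():
        return float(value)
    try:
        when = parsedate_to_datetime(value)
    except (TypeError, ValueError):
        return None                      # unparseable: caller falls back to backoff
    if when.tzinfo is None:
        when = when.replace(tzinfo=timezone.utc)
    return max(0.0, (when - datetime.now(timezone.utc)).total_seconds())


def send_with_retries(send: Callable[[], Response], max_retries: int = 5,
                      base: float = 1.0, cap: float = 60.0,
                      sleep: Callable[[float], None] = time.sleep) -> Response:
    """Call `send`, retrying only on HTTP 429, at most `max_retries` times."""
    attempt = 0
    while True:
        response = send()
        if response.status != 429 or attempt >= max_retries:
            return response              # success, other error, or out of retries
        wait = retry_after_seconds(response.headers.get("Retry-After"))
        if wait is None:                 # no usable header: jittered exponential backoff
            wait = random.uniform(0, min(cap, base * 2 ** attempt))
        sleep(wait)
        attempt += 1
```

A plain dict is case-sensitive, so this looks up exactly "Retry-After"; real clients such as `requests` expose case-insensitive headers, which avoids that pitfall.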

4. Cache API Responses

Why request the same data again?

Store responses locally using:

- Memory cache

- Redis

- CDN

This cuts down on API calls, since every cache hit is one request that never counts against your rate limit.
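Below is a minimal in-memory version of the first option, assuming you key the cache on the request parameters (the `(prompt, aspect_ratio)` key and `generate_image` function in the usage comment are hypothetical). Only cache where returning the same result for an identical request is acceptable, because image generation normally produces a new image on every call. In production you would also bound the cache’s size or move it to Redis.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """In-memory cache whose entries expire `ttl` seconds after being stored."""

    def __init__(self, ttl: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl
        self._clock = clock
        self._store: Dict[Hashable, Tuple[float, Any]] = {}  # key -> (expires_at, value)

    def get_or_fetch(self, key: Hashable, fetch: Callable[[], Any]) -> Any:
        """Return the cached value for `key`; call `fetch` only on a miss."""
        entry = self._store.get(key)
        if entry is not None and entry[0] > self._clock():
            return entry[1]              # fresh hit: no API call made
        value = fetch()                  # miss or expired entry: one API call
        self._store[key] = (self._clock() + self.ttl, value)
        return value


# Usage (generate_image is a hypothetical wrapper around your API call):
# cache = TTLCache(ttl=300)
# url = cache.get_or_fetch((prompt, aspect_ratio),
#                          lambda: generate_image(prompt, aspect_ratio))
```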

5. Optimize API Calls

Ask yourself:

- Can multiple requests be combined?

- Are all requests necessary?

Tips:

- Use batch requests where the API supports them

- Avoid duplicate calls

- Fetch only required data

👉 Fewer, leaner requests mean you reach your rate limit less often.
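One way to avoid duplicate calls when the same request arrives several times at once (for example, two users submitting an identical prompt) is to let them share a single in-flight call. This is a sketch using Python’s `asyncio`; `InFlightDeduper` and the key you pass it are hypothetical names.

```python
import asyncio
from typing import Any, Awaitable, Callable, Dict, Hashable


class InFlightDeduper:
    """Lets concurrent identical requests share one in-flight API call."""

    def __init__(self) -> None:
        self._pending: Dict[Hashable, "asyncio.Future[Any]"] = {}

    async def run(self, key: Hashable,
                  call: Callable[[], Awaitable[Any]]) -> Any:
        task = self._pending.get(key)
        if task is None:
            # First request for this key: start the real API call.
            task = asyncio.ensure_future(call())
            self._pending[key] = task
            # Forget the key once the call finishes, so later requests call again.
            task.add_done_callback(lambda _t: self._pending.pop(key, None))
        # shield(): one caller being cancelled doesn't cancel the shared call.
        return await asyncio.shield(task)
```

Unlike a cache, this only merges requests that overlap in time; once the call completes, the next identical request goes to the API again.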

6. Upgrade API Plan

Sometimes, the problem isn’t your code—it’s your limits.

If you’re hitting caps frequently:

- Upgrade to a higher-tier plan

- Get higher request quotas

💡 Best Practices to Avoid API Rate Limiting

Prevention is always better than fixing errors later.

Here’s how to stay safe:

✔ Monitor API Usage

Track requests and 429 responses in real time.
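If you don’t have a dashboard yet, a small client-side counter already shows how close you are to the limit. This sketch keeps a rolling 60-second window of response status codes (an assumed window; match it to your actual limit) and reports the request count and the share of 429s. `UsageMonitor` is a hypothetical name.

```python
import time
from collections import deque
from typing import Callable, Deque, Dict, Tuple


class UsageMonitor:
    """Counts requests and 429 responses over a rolling time window."""

    def __init__(self, window: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.window = window
        self._clock = clock
        self._events: Deque[Tuple[float, int]] = deque()  # (timestamp, status)

    def record(self, status: int) -> None:
        """Call once per API response with its HTTP status code."""
        self._events.append((self._clock(), status))

    def snapshot(self) -> Dict[str, float]:
        """Requests, 429 count, and 429 share within the last `window` seconds."""
        cutoff = self._clock() - self.window
        while self._events and self._events[0][0] < cutoff:
            self._events.popleft()       # drop events older than the window
        total = len(self._events)
        throttled = sum(1 for _, status in self._events if status == 429)
        return {"requests": total, "throttled": throttled,
                "throttle_rate": throttled / total if total else 0.0}
```

Logging `snapshot()` periodically, or alerting when `throttle_rate` climbs, surfaces trouble before users notice it.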

✔ Use Background Queues

Process requests asynchronously.

✔ Implement Request Batching

Combine multiple calls into one.

✔ Use Caching Layers

CDNs and edge caching reduce load.

✔ Graceful Error Handling

Show fallback UI instead of crashing.
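A minimal sketch of graceful degradation, assuming your client raises a hypothetical `RateLimitedError` once its retries are used up. The caller always gets a result the UI can render, either an image URL or a friendly message, instead of an unhandled exception.

```python
from dataclasses import dataclass
from typing import Callable, Optional


class RateLimitedError(Exception):
    """Hypothetical: raised by your client when retries on a 429 run out."""


@dataclass
class ImageResult:
    url: Optional[str]   # None when no image could be generated
    message: str


def generate_or_fallback(generate: Callable[[str], str], prompt: str) -> ImageResult:
    """Return something the UI can always render, even when throttled."""
    try:
        return ImageResult(url=generate(prompt), message="ok")
    except RateLimitedError:
        # Degrade gracefully: no image yet, but a clear message instead of a crash.
        return ImageResult(url=None,
                           message="We're busy right now. Please try again in a minute.")
```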

👉 These strategies are essential for API optimization and performance improvement.

📈 Advanced Techniques for Developers

Want to go deeper? These techniques are used in production systems.

Token Bucket Algorithm

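Adds tokens to a bucket at a fixed rate, up to a maximum capacity.

- Each request consumes a token

- No tokens → the request waits (or is rejected) until the bucket refills

Because tokens accumulate up to that capacity, the bucket allows short bursts while still enforcing the average rate. It’s efficient and widely used.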
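A compact Python sketch of a token bucket follows. The class name and parameters are illustrative: `capacity` sets how large a burst is allowed, and `rate` sets the long-run average in requests per second. This version is not thread-safe, so guard it with a lock if several threads share it.

```python
import time
from typing import Callable


class TokenBucket:
    """Token bucket: refills at `rate` tokens/second, holds up to `capacity`."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self._clock = clock
        self._tokens = capacity          # start full, allowing an initial burst
        self._last = clock()

    def _refill(self) -> None:
        now = self._clock()
        self._tokens = min(self.capacity,
                           self._tokens + (now - self._last) * self.rate)
        self._last = now

    def try_acquire(self) -> bool:
        """Take one token if available; False means 'deny or wait'."""
        self._refill()
        if self._tokens >= 1:
            self._tokens -= 1
            return True
        return False

    def acquire(self) -> None:
        """Block until a token is available (client-side waiting)."""
        while not self.try_acquire():
            time.sleep((1 - self._tokens) / self.rate)  # time until one token
```

In front of an external API, `acquire()` (waiting) is usually more useful than `try_acquire()` (denying), since the request still needs to go out eventually.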

Queue Management Systems

Tools like RabbitMQ or Kafka help:

- Queue requests

- Process them gradually
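RabbitMQ and Kafka are typically used when jobs must survive restarts or span several machines. Inside a single process, the same idea can be sketched with `asyncio.Queue`: a fixed number of workers pull jobs and pause between calls, so throughput stays bounded no matter how many jobs pile up. `process_queue`, `workers`, and `pause` are illustrative names and values, not part of any queueing library.

```python
import asyncio
from typing import Any, Awaitable, Callable, List


async def process_queue(jobs: List[Any],
                        handle: Callable[[Any], Awaitable[Any]],
                        workers: int = 2,
                        pause: float = 0.5) -> List[Any]:
    """Drain `jobs` with a fixed number of workers, pausing between calls."""
    queue: asyncio.Queue = asyncio.Queue()
    for index, job in enumerate(jobs):
        queue.put_nowait((index, job))
    results: List[Any] = [None] * len(jobs)

    async def worker() -> None:
        while True:
            try:
                index, job = queue.get_nowait()
            except asyncio.QueueEmpty:
                return                          # queue drained: worker exits
            results[index] = await handle(job)  # one API call per job
            await asyncio.sleep(pause)          # spacing keeps the rate bounded

    await asyncio.gather(*(worker() for _ in range(workers)))
    return results
```

If `handle` raises, that worker stops and `gather` propagates the error; a production version would catch failures per job and record them.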

Load Balancing API Requests

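Distribute traffic across your servers so no single one is overloaded. Keep in mind that API rate limits typically apply per account or API key, so spreading calls across servers doesn’t raise your limit; the servers still need to share one rate limiter.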

Async Processing

Instead of blocking execution:

- Use async calls

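- Improve throughput, as long as you also cap how many calls run at once (unbounded async calls create exactly the kind of burst that triggers a 429)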
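A sketch of async processing with a concurrency cap, using `asyncio.Semaphore`. The default of 3 concurrent calls is an arbitrary assumption; derive the real number from your actual rate limit. `gather_limited` is a hypothetical helper name.

```python
import asyncio
from typing import Awaitable, Callable, Iterable, List, TypeVar

T = TypeVar("T")
R = TypeVar("R")


async def gather_limited(items: Iterable[T],
                         call: Callable[[T], Awaitable[R]],
                         max_concurrent: int = 3) -> List[R]:
    """Run `call` for every item concurrently, but never more than
    `max_concurrent` at once. Results come back in input order."""
    semaphore = asyncio.Semaphore(max_concurrent)

    async def guarded(item: T) -> R:
        async with semaphore:            # waits while max_concurrent calls are running
            return await call(item)

    return await asyncio.gather(*(guarded(item) for item in items))
```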

🔍 Real Example of Fixing Error 429

Let’s imagine a real-world scenario.

🚫 Before Fix:

- App sends 50 requests instantly

- Result → 429 errors

✅ After Fix:

- Add a rate limiter (5 requests/sec), so the 50 requests are spread over roughly 10 seconds

- Add caching

- Use exponential backoff

Result:

- Few or no 429 errors

- Faster responses for cached requests

- Better user experience

⚖️ Ideogram API Limits Explained

Exact limits vary by account and plan, so check Ideogram’s API documentation for current values. In general:

Typical Limits:

- Requests per minute

- Requests per hour

Free vs Paid:

- Free → lower limits

- Paid → higher limits

How to Check Usage:

- Dashboard metrics

- API response headers
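Many APIs report quota in response headers, but the names vary by provider. The helper below looks for a few common conventions (`X-RateLimit-*`, `RateLimit-*`, and the standard `Retry-After`). Whether Ideogram sends any of these, and under which names, is an assumption you should check against real responses.

```python
from typing import Dict, Mapping

# Common conventions only, NOT confirmed Ideogram header names.
CANDIDATE_HEADERS = {
    "limit": ("X-RateLimit-Limit", "RateLimit-Limit"),
    "remaining": ("X-RateLimit-Remaining", "RateLimit-Remaining"),
    "retry_after": ("Retry-After",),
}


def read_rate_limit_headers(headers: Mapping[str, str]) -> Dict[str, str]:
    """Collect whichever rate-limit headers are present (case-insensitive)."""
    lowered = {name.lower(): value for name, value in headers.items()}
    info: Dict[str, str] = {}
    for key, names in CANDIDATE_HEADERS.items():
        for name in names:
            value = lowered.get(name.lower())
            if value is not None:
                info[key] = value        # first matching header name wins
                break
    return info
```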

FAQs

What does “Too Many Requests” mean in API?

It means your application has exceeded the allowed number of API calls within a specific time frame. The server temporarily blocks additional requests to prevent overload and ensure fair usage across all users.

How do I fix error 429 quickly?

Reduce request frequency immediately, add delays between calls, and implement retry logic using exponential backoff. Also, check if your API plan limit has been exceeded.

What is API rate limiting?

API rate limiting is a mechanism that restricts the number of requests a user can send within a certain time window to prevent server overload and ensure fair resource distribution.

Can caching prevent API errors?

Yes, caching significantly reduces repeated API calls by storing previously fetched data, which helps avoid hitting rate limits and improves performance.

What is exponential backoff?

It’s a retry strategy where the delay between retries increases exponentially after each failed attempt, reducing server load and improving the chances of successful requests.

How many requests does Ideogram API allow?

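The exact limits depend on your account and plan, and they can change over time, so check Ideogram’s official API documentation or your dashboard for current values. In general, lower tiers have stricter limits, while higher-tier plans offer larger request quotas per minute or hour.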

Why does my API keep failing?

Your API calls may fail because of excessive requests, poor error handling, a lack of caching, or repeatedly hitting rate limits without a proper retry strategy.

Is error 429 permanent?

No, it’s temporary. Once the rate limit window resets, you can send requests again. However, with some providers, repeated violations can lead to stricter restrictions.

How do I monitor API usage?

You can monitor usage through API dashboards, logs, or analytics tools that track request counts and error rates in real time.

What tools help prevent API throttling?

Tools like rate limiters, caching systems (Redis), monitoring dashboards, and queue management systems help manage API usage efficiently and prevent throttling.

Conclusion

The Ideogram API “Too Many Requests” error isn’t just an obstacle; it’s a signal.

It tells you:

👉 Your app is growing

👉 Your API usage needs optimization

By implementing:

- Rate limiting

- Exponential backoff

- Caching

- Smart retry logic

You not only fix the issue—you build a stronger, more scalable application.

If you’re exploring more AI tools and optimization strategies, platforms like AI Tool Mapper can help you discover better integrations and performance-focused solutions.